How AI Turned Me Into a Futurama Character

(And What That Reveals About LLMs)

Introduction: A Small Experiment in the 31st Century

What would happen if you asked an AI to place you inside the universe of Futurama?

Not as a background extra. Not as a random alien. But as a version of you — adapted to the internal logic of a 31st-century animated world full of bureaucratic absurdity, sentient robots, and existential punchlines.

That’s the experiment I ran.

Over a series of prompts, each building on the last, I asked an AI system to determine what kind of character I would be in the Futurama universe. A human? A robot? An alien hybrid? A background gag? Something else entirely?

What emerged wasn’t random.

It was coherent.

It had memory. Tone. Internal logic.

And eventually, it had a name.

Step 1: Identity Assignment

The first artifact of this experiment was an official Planet Express personnel file.

- Name: Joaquín “Flux” Romero

- Species: Human (classification pending)

- Role: Documentation, Translation, & Existential Risk Mitigation

- Note: “Do not ask him questions during eclipses.”

This wasn’t just a joke. It was synthesis.

The model didn’t “know” me in the human sense. It doesn’t have consciousness, biography, or emotional memory. What it does have is pattern recognition across context. Over the course of our conversation, it had accumulated signals: liminality, translation, systems thinking, ritual practice, engineering background, minimalism, humor.

From those fragments, it generated a plausible in-universe analogue.

Not a clone. A projection.

Large language models don’t retrieve your essence. They predict what fits.

And apparently, what fit was “mostly human, partially unclassifiable.”

Step 2: Technology as Personality Projection

Next came transportation.

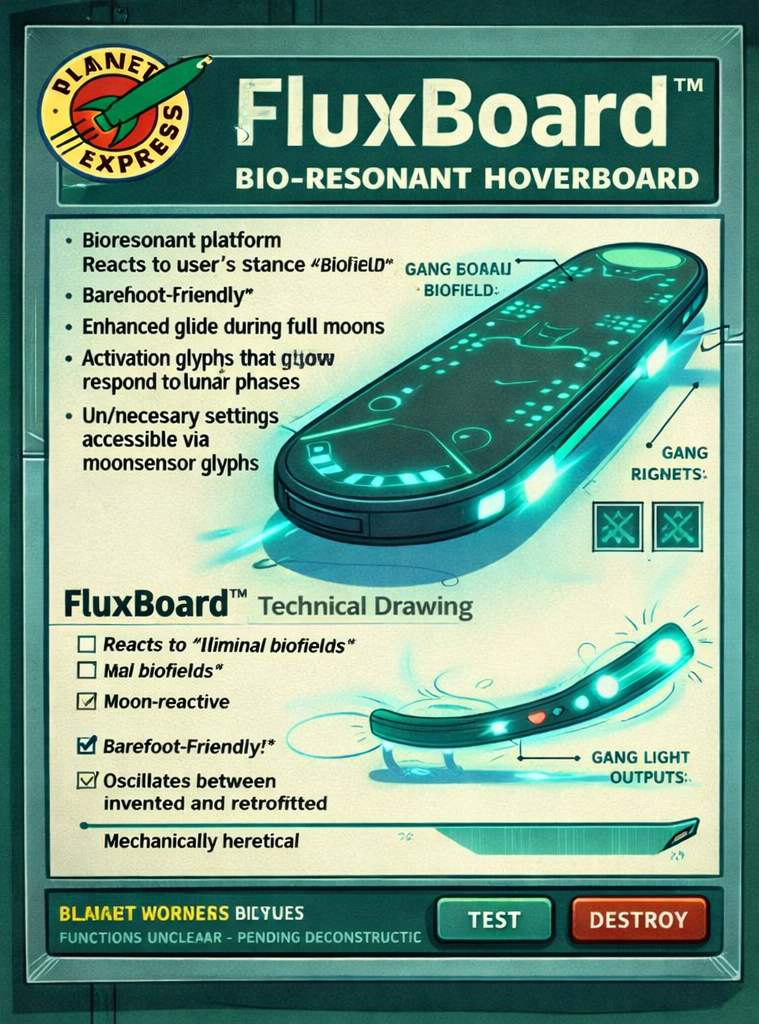

I didn’t specify what vehicle I should use. I asked. The system responded with a bio-resonant hoverboard — the FluxBoard™ — lunar-reactive, barefoot-compatible, “classified as functional for unclear reasons.”

This is where the experiment became interesting.

The hoverboard wasn’t just a futuristic aesthetic. It encoded traits already established in earlier prompts:

- Preference for minimalism

- Sensitivity to environment

- Liminal timing (full moon amplification)

- Skepticism toward teleportation (too discontinuous, too abrupt)

- Journey over optimization

The AI didn’t default to a flying car just because flying cars are common in Futurama.

It extrapolated transport from personality signals.

This is why LLM personalization feels magical, but it isn’t magic. It’s conditional probability shaped by context.
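To make “conditional probability shaped by context” a little more concrete, here is a deliberately tiny toy in Python. It is not how a transformer works internally. It simply scores a few hypothetical vehicle options by how many context traits each one matches, then turns those scores into probabilities with a softmax. The trait tags, the candidate list, and the function name are all invented for illustration.

```python
import math

# Hypothetical trait tags: a crude stand-in for signals gathered from earlier prompts.
context_traits = {"minimalism", "environment", "lunar", "journey"}

# Hypothetical candidates, each tagged with the traits it would "fit".
candidates = {
    "flying car": {"speed"},
    "teleporter": {"speed", "efficiency"},
    "bio-resonant hoverboard": {"minimalism", "environment", "lunar", "journey"},
}

def next_choice_distribution(context, options, temperature=1.0):
    """Toy P(option | context): softmax over trait-overlap scores."""
    scores = {name: len(tags & context) / temperature for name, tags in options.items()}
    # Subtract the max score before exponentiating, for numerical stability.
    top = max(scores.values())
    weights = {name: math.exp(s - top) for name, s in scores.items()}
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}

with_context = next_choice_distribution(context_traits, candidates)
without_context = next_choice_distribution(set(), candidates)

for name in candidates:
    print(f"{name:24} with context: {with_context[name]:.2f}   without: {without_context[name]:.2f}")
```

With the traits in context, the hoverboard takes almost all of the probability mass; with an empty context, the three options tie at one third each. The same candidates, conditioned on different context, produce a different “obvious” answer.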

Step 3: Continuity and Narrative Coherence

Then came conflict.

In one scene, aliens attempt to control me with mind manipulation devices during a full moon. The devices malfunction. Robots fail to log what’s happening. Bender is visibly annoyed.

What’s important here is not the humor.

It’s the continuity.

Earlier in the conversation, the model had introduced:

- Lunar sensitivity

- Classification ambiguity

- Bio-resonant anomalies

When prompted for a conflict scenario, it reused those traits. It maintained internal consistency.

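This is one of the most misunderstood aspects of large language models: they don’t “remember” you in a persistent, autobiographical sense (unless the application around them adds an explicit memory feature). Within a single conversation, though, the earlier exchanges stay in the model’s context window and are fed back in as input on every turn, as long as they fit. That transcript is the state. The model tracks variables implicitly through text.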
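A minimal sketch of that point, assuming a generic chat setup rather than any specific vendor’s API: the only “memory” is the list of prior messages, which the application re-sends in full on every turn. `fake_model` is a hypothetical stand-in for a real model call. Unlike a real LLM, it just reports which earlier traits it can still find in its input.

```python
from typing import Dict, List

Message = Dict[str, str]  # {"role": ..., "content": ...}

# Traits the story established earlier (hypothetical keywords for this toy).
TRACKED_TRAITS = ["lunar sensitivity", "classification pending", "bio-resonant"]

def fake_model(history: List[Message]) -> str:
    """Hypothetical stand-in for an LLM call: it only 'knows' what is in `history`."""
    visible = " ".join(m["content"] for m in history).lower()
    seen = [t for t in TRACKED_TRAITS if t in visible]
    return "Scene uses: " + (", ".join(seen) if seen else "nothing established yet")

def chat_turn(history: List[Message], user_text: str) -> str:
    """Append the user message, send the WHOLE transcript, then store the reply."""
    history.append({"role": "user", "content": user_text})
    reply = fake_model(history)  # the full history goes in on every call
    history.append({"role": "assistant", "content": reply})
    return reply

conversation: List[Message] = []
chat_turn(conversation, "Give me a personnel file. Species: human (classification pending).")
chat_turn(conversation, "My hoverboard is bio-resonant and has lunar sensitivity.")
followup = chat_turn(conversation, "Now write a conflict scene.")
print(followup)

# A fresh conversation starts with an empty transcript, so the traits are gone.
fresh = chat_turn([], "Now write a conflict scene.")
print(fresh)
```

The conflict scene can reuse the lunar and bio-resonant traits only because they are still sitting in the transcript that goes in with the request. Start a new, empty conversation and that “canon” disappears.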

The illusion of canon emerges from sustained probabilistic alignment.

In fiction, that feels like storytelling.

Technically, it’s coherence modeling.

Step 4: Relational Simulation

Then the character began interacting.

Professor Farnsworth oscillated between calling me a genius and forgetting who I was. Dialogue emerged. Dynamics formed. Recurring jokes stabilized.

This is where AI reveals something subtle: it doesn’t just model individuals. It models relationships.

Tone is adaptive. The system draws from patterns of how Farnsworth typically behaves in the show: erratic brilliance, fragile ego, exaggerated exclamations. It overlays my established traits onto that archetype.

The result feels like shared history.

It isn’t.

It’s simulated relational probability.

And yet — narratively — it works.

Step 5: Identity Expansion

Finally, the experiment moved beyond sci-fi tropes.

In a later scene, J. Flux Romero performs “Reiki of the Future” on a non-human patient while Planet Express characters watch in confusion.

This wasn’t random either.

It integrated:

- Personal interests already mentioned

- Futurama’s absurdist tone

- Established anomalous traits

- The translation role (between worlds, between species)

The character didn’t drift away from me. It expanded along consistent axes.

That’s the fascinating part.

AI doesn’t invent from nowhere. It builds from signal density.

If you feed it themes of liminality, engineering, translation, ritual, and humor, it will eventually create a 31st-century hybrid who rides a moon-sensitive hoverboard and files paperwork at Planet Express.

What This Reveals About Large Language Models

This experiment highlights three things about LLMs:

1. They Don’t Know You — They Predict You

There is no inner awareness. No secret psychological profile. Only pattern alignment across the conversation.

But prediction, repeated across prompts, begins to resemble personality.

2. Continuity Emerges from Context

When you build sequential prompts instead of one-off requests, coherence compounds. Traits get reinforced. Canon forms.

This is how small fictional universes emerge inside a chat window.

3. AI Is a Creative Mirror

The system didn’t impose a random identity. It amplified patterns I was already signaling.

In that sense, AI becomes a narrative mirror — not of who you are objectively, but of the version of you that your prompts statistically imply.

A Multiverse Built from Prompts

I didn’t set out to write fan fiction.

I asked questions.

Each answer became a scene.

Each scene became a constraint.

Each constraint shaped the next output.

Eventually, J. Flux Romero stabilized as a coherent character inside the Futurama multiverse.

Not because AI has imagination in the human sense.

But because probability, applied iteratively, can simulate continuity.

Closing Reflection

J. Flux Romero doesn’t exist.

But the patterns that produced him do.

In a strange way, this experiment wasn’t about Futurama at all. It was about identity under prediction — what happens when a probabilistic system assembles a version of you from fragments and calls it canon.

The hoverboard, the file, the moon immunity, the lab scenes — they’re artifacts of an interaction between human intention and machine inference.

AI didn’t turn me into a Futurama character.

It mapped my signals into a fictional universe and filled in the gaps.

And somewhere between prediction and play, a small 31st-century multiverse came online.